DelFly

The DelFly is a bio-inspired drone that flies by flapping its wings. Flapping wing drones are currently much less well understood than rotorcraft or fixed wing drones. A main reason for this is that the aerodynamic principles underlying the generation of lift and thrust are very different: flapping wing drones rely on unsteady aerodynamic effects in order to fly. In the DelFly project, we study various aspects of flapping wings: the unsteady aerodynamics, a control model that maps control commands to the subsequent motion dynamics (system identification), different designs and materials, and the artificial intelligence that will let these lightweight drones fly completely by themselves.

In 2018, we presented the “DelFly Nimble”, a flapping wing drone that steers with its wings and is able to perform very agile maneuvers. We have used it to study the dynamics and control of escape maneuvers such as those performed by fruit flies. Despite the 55x difference in scale, the dynamics of the DelFly and the fruit fly were so similar that they allowed us to provide an explanation for the motion of fruit flies. The findings of this study were published in Science (see below).

Selected publications:

(2022), Wang, S., Olejnik, D., de Wagter, C., van Oudheusden, B., de Croon, G.C.H.E., and Hamaza, S. Battle the wind: Improving flight stability of a flapping wing micro air vehicle under wind disturbance with onboard thermistor-based airflow sensing. IEEE Robotics and Automation Letters, 7(4), 9605-9612.

(2020), de Croon, G.C.H.E., Flapping wing drones show off their skills, in Science Robotics 5, eabd0233. (article)

(2018), Karásek, M., Muijres, F. T., De Wagter, C., Remes, B.D., and de Croon, G.C.H.E. A tailless aerial robotic flapper reveals that flies use torque coupling in rapid banked turns. Science, 361(6407), 1089-1094. (pdf – author version, original version). – Study in which we use the DelFly Nimble to better understand fruit fly escape maneuvers.

(2014), De Wagter, C., Tijmons, S., Remes, B.D.W., and de Croon, G.C.H.E., “Autonomous Flight of a 20-gram Flapping Wing MAV with a 4-gram Onboard Stereo Vision System”, at the 2014 IEEE International Conference on Robotics and Automation (ICRA 2014).(draft – pdf) – First fully autonomous flight of the DelFly, in which it avoids obstacles with onboard stereo vision.

(2012), de Croon, G.C.H.E., M.A. Groen, De Wagter, C., Remes, B.D.W., Ruijsink, R., and van Oudheusden, B.W. “Design, Aerodynamics, and Autonomy of the DelFly”, in Bioinspiration and Biomimetics, Volume 7, Issue 2.(draft – pdf) (Bibtex) – Overview article of the DelFly

Swarms of tiny autonomous drones

Whereas a single insect has very limited capabilities, a swarm of insects is able to perform complex tasks, like finding the shortest path to a food source. Inspired by this, it is expected that small robots will also be able to perform more complex tasks when they work together in swarms.

In my research, I work towards autonomous swarms of tiny drones that are able to navigate in unknown environments with only their onboard resources. In 2019, we finished a project in which a swarm of tiny drones had to explore an unstructured indoor environment (such as a disaster area). The drones are small quadrotors, with a weight of 33 grams and a diameter of 10 cm. Their low weight and relatively low speed make the drones inherently safe for people, while the small size allows them to navigate even in very narrow indoor spaces. In 2021, we added gas sensors to the drones, which allowed them to collaborate in localizing gas leaks in unknown, cluttered environments.

The fundamental scientific challenge in creating autonomous swarms derives from the small size of the drones. It entails strict limitations in onboard energy, sensing, and processing, ruling out state-of-the-art approaches to autonomous control and navigation. Instead, we have developed efficient solutions for: (1) low-level navigation such as obstacle avoidance or flying through narrow corridors, (2) high-level navigation that allows the drones to reach places of interest, and (3) coordination with the other drones to explore the environment efficiently. Specifically, in 2019, we presented the “Swarm Gradient Bug Algorithm” (SGBA) in an article in Science Robotics. This algorithm allows a swarm of tiny Crazyflie drones to fly away from a base station, explore an area, and then come back to a wireless beacon at the base station.

Selected publications:

(2021), Duisterhof, B. P., Li, S., Burgués, J., Reddi, V. J., & de Croon, G. C. H. E. Sniffy bug: A fully autonomous swarm of gas-seeking nano quadcopters in cluttered environments. In 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) (pp. 9099-9106). IEEE.

(2020), Coppola, M., McGuire, K.N., De Wagter, C., and de Croon, G.C.H.E., A Survey on Swarming With Micro Air Vehicles: Fundamental Challenges and Constraints, in Frontiers in Robotics and AI 7, 18 (article)

(2019), McGuire, K. N., De Wagter, C., Tuyls, K., Kappen, H. J., & de Croon, G. C. H. E. Minimal navigation solution for a swarm of tiny flying robots to explore an unknown environment. Science Robotics, 4(35), eaaw9710. (final | author version)

(2019), McGuire, K.N., de Croon, G.C.H.E., Tuyls, K., A comparative study of bug algorithms for robot navigation, in Robotics and Autonomous Systems 121, 103261. ( final | preprint)

(2018), Coppola, M., McGuire, K.N., Scheper, K.Y.W., & de Croon, G.C.H.E. On-board communication-based relative localization for collision avoidance in micro air vehicle teams. Autonomous robots, 42, 1787-1805.

(2017), McGuire, K., de Croon, G.C.H.E., De Wagter, C., Tuyls, K., and Kappen, H.J. Efficient Optical flow and Stereo Vision for Velocity Estimation and Obstacle Avoidance on an Autonomous Pocket Drone. IEEE Robotics and Automation Letters, 2(2), 1070-1076. (pdf on arXiv)

Optical Flow

Roboticists look with envy at small, flying insects, such as honeybees or fruit flies. These small animals perform marvelous feats such as landing on a flower in gusty wind conditions. Biologists have unveiled that flying insects rely heavily on optical flow for such tasks. Optical flow captures the way in which objects move over an animal’s (or robot’s) image. For instance, when looking out of a train, trees close-by will move very quickly over the image (large optical flow), while a mountain in the distance will move slowly (small optical flow). Insects are believed to employ very straightforward optical flow control strategies. For instance, when landing they keep the flow and the expansion of the flow constant, so that they gradually slow down as they descend. Successfully introducing similar strategies in spacecraft or drones holds a huge potential, since optical flow can be measured with tiny, energy efficient sensors and the straightforward control strategies hardly require any computation.
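The train example and the constant-divergence landing can be made concrete with a few lines of arithmetic. The sketch below is only an illustration: the function names, the 30 m/s train speed, and the distances are assumptions chosen for the example, and the flow formula holds for a point seen directly sideways from the direction of travel.

```python
import math


def translational_flow(speed_m_s: float, distance_m: float) -> float:
    """Optical flow (rad/s) of a point seen directly sideways from a moving observer."""
    return speed_m_s / distance_m


def height_under_constant_divergence(z0: float, divergence: float, t: float) -> float:
    """Height after t seconds when descent speed / height is held at `divergence` (1/s).

    Solving dz/dt = -divergence * z gives an exponential decay of height.
    """
    return z0 * math.exp(-divergence * t)


# Illustrative numbers (assumed): a train moving at 30 m/s.
train_speed = 30.0
flow_tree = translational_flow(train_speed, 10.0)          # nearby tree, 10 m away
flow_mountain = translational_flow(train_speed, 10_000.0)  # mountain, 10 km away

# Landing from 10 m with the ratio descent speed / height held at 0.5 1/s.
heights = [height_under_constant_divergence(10.0, 0.5, t) for t in range(5)]
descent_speeds = [0.5 * z for z in heights]  # speed shrinks together with height
```

Because the descent speed is always a fixed fraction of the remaining height, the vehicle slows down gradually as it approaches the surface, without ever measuring the height itself.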

My goal is not just to transfer findings from biology to robotics, but also to generate new hypotheses on how insects navigate in their environment. In particular, I investigate the algorithms involved in:

Optical flow determination: On a “normal” processor available on larger drones (500g) a standard optical flow algorithm can be run (e.g., FAST corner detection plus Lucas-Kanade optical flow tracking). On the tiny processors aboard our pocket drones (50g), new algorithms have to be developed in order to get a satisfying update frequency. Finally, over the past years new optical flow methods that are based on deep neural networks have been introduced. They are very successful, but what they do exactly is not yet well understood.

Flow field interpretation: Given the optical flow in the image, how can the robot extract useful information such as the flow divergence (expansion), the slope of the surface it is flying over, or the flatness of the terrain beneath the robot? Different approaches are possible here with different accuracies and amounts of processing.

Control: I analyze the nonlinear optical flow control laws theoretically in order to find better control strategies. This also means studying how to use optical flow for different tasks such as landing or obstacle avoidance.
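As an illustration of the flow field interpretation step, the following minimal sketch estimates the flow divergence from a handful of flow vectors by fitting straight lines to the horizontal and vertical flow components. It is one simple approach under stated assumptions, not the method used in the publications below: the helper names are hypothetical, the sample points are assumed to lie on a regular grid (so that x and y are uncorrelated), and divergence is taken as du/dx + dv/dy, which differs by a constant factor from some conventions in the literature.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit ys ≈ intercept + slope * xs; returns (intercept, slope)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def flow_divergence(points, flows):
    """Estimate du/dx + dv/dy from sparse flow vectors (u, v) at image points (x, y)."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    us = [f[0] for f in flows]
    vs = [f[1] for f in flows]
    _, du_dx = fit_line(xs, us)  # how horizontal flow grows with x
    _, dv_dy = fit_line(ys, vs)  # how vertical flow grows with y
    return du_dx + dv_dy


# Synthetic flow field (assumed): a small sideways drift plus a uniform expansion.
points = [(x, y) for x in range(-2, 3) for y in range(-2, 3)]
flows = [(0.1 + 0.2 * x, -0.05 + 0.2 * y) for x, y in points]
divergence = flow_divergence(points, flows)
```

The intercepts of the two fits capture the sideways drift, while the slopes capture the expansion, so the two effects are separated by the same cheap computation.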

A central belief regarding optical flow control, both in biology and in robotics, is that it does not require the robot to know distances. The reason for this belief is that optical flow as a visual cue does not provide information on distance per se, but on the ratio of velocity to height. In contrast, I argue that distances still play an important role in optical flow control. In 2016, I published an article on this matter in Bioinspiration and Biomimetics. In particular, I analyzed constant optical flow divergence landing and uncovered that robots that do not change their “control gains” (the strength of their reactions) will always start to oscillate at a specific height above the landing surface. This self-induced control instability at first sight seems troublesome, since it will always happen at some height along the landing trajectory. However, this problem of oscillation can be turned into a strength! If the robot can detect that it is self-inducing oscillations, it can know its height! In fact, it will not perceive height in meters but in terms of the control gain that will be ideal for high-performance optical flow control. Hence, robots can also use this theory to find out which control gain is best for performance. For instance, for high-performance optical flow landing, the robot should adapt its control gains to the height all along the landing. This finding is relevant not only to robots but also to biology, generating novel hypotheses on how insects perform optical flow control. More information can be found here.
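Why a fixed gain leads to oscillations at a specific height can be seen in a deliberately simplified toy model. This is a sketch under strong assumptions and not the analysis from the article: the height is frozen while the velocity loop runs, the controller is a proportional law updated once per time step (Euler integration), and all names and numbers are hypothetical. In this model the divergence error is multiplied by (1 - gain * dt / height) at every step, so it grows once the height drops below gain * dt / 2.

```python
def critical_height(gain: float, dt: float) -> float:
    """Height below which the toy loop is unstable: |1 - gain * dt / height| > 1."""
    return gain * dt / 2.0


def divergence_error_after(height, gain, dt, steps, initial_error=0.01):
    """Run the toy velocity loop at a frozen height; return the final |divergence error|."""
    ref_divergence = -0.3  # desired vertical velocity / height (descending), assumed value
    velocity = (ref_divergence + initial_error) * height
    for _ in range(steps):
        divergence = velocity / height                 # what the camera observes
        accel = gain * (ref_divergence - divergence)   # fixed-gain proportional control
        velocity += accel * dt
    return abs(velocity / height - ref_divergence)


gain, dt = 10.0, 0.1
z_crit = critical_height(gain, dt)                         # 0.5 m for these numbers
error_high = divergence_error_after(2.0, gain, dt, steps=20)  # well above z_crit
error_low = divergence_error_after(0.4, gain, dt, steps=20)   # just below z_crit
```

Above the critical height the error dies out, while below it the error flips sign and grows at every step, which is the oscillation described above. Since the critical height is proportional to the gain, detecting the onset of oscillation tells the robot its height in units of gain.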

Whereas the use of optical flow in landing and collision avoidance is evident, I recently also proposed that it is important for attitude estimation. Drones determine their attitude, i.e., their orientation with respect to gravity, with the help of accelerometers. However, insects do not have such a sense. Biological experiments with falling hoverflies did, however, make clear that optical flow may play a role in attitude estimation and control. In a 2022 Nature paper, together with my co-authors, I showed that combining optical flow with a motion model is sufficient for determining attitude. Our mathematical analysis also showed that there are situations in which attitude cannot be observed. For example, when hovering still, the attitude cannot be determined. We provide a proof that this unobservability is actually not much of a problem, as the system remains stable. However, intriguingly, it does lead to slightly more “wobbly” flight.

Selected publications:

(2022), De Croon, G.C.H.E., Dupeyroux, J.J., De Wagter, C., Chatterjee, A., Olejnik, D.A., & Ruffier, F. Accommodating unobservability to control flight attitude with optic flow. Nature, 610(7932), 485-490.

(2021), de Croon, G.C.H.E., De Wagter, C., and Seidl, T. Enhancing optical-flow-based control by learning visual appearance cues for flying robots. Nature Machine Intelligence, 3(1), 33-41.

(2018), Ho, H.W., de Croon, G.C.H.E., van Kampen, E., Chu, Q.P., and Mulder, M. Adaptive Gain Control Strategy for Constant Optical Flow Divergence Landing. IEEE Transactions on Robotics, 34(2), 508-516. (pdf – arxiv), (official version)

(2018), Lee, S.H., and de Croon, G.C.H.E. Stability-Based Scale Estimation for Monocular SLAM. IEEE Robotics and Automation Letters, 3(2), 780-787. (official version)

(2016), de Croon, G.C.H.E., Monocular distance estimation with optical flow maneuvers and efference copies: a stability-based strategy, in Bioinspiration and Biomimetics, vol. 11, number 1. (pdf)

(2015), de Croon, G.C.H.E., Alazard, D., Izzo, D., “Controlling spacecraft landings with constantly and exponentially decreasing time-to-contact”, IEEE Transactions on Aerospace and Electronic Systems, April 2015, 51(2), pages 1241 – 1252, (original).

(2013), de Croon, G.C.H.E., and Ho, H.W., and De Wagter, C., and van Kampen, E., and Remes B., and Chu, Q.P., “Optic-flow based slope estimation for autonomous landing”, in the International Journal of Micro Air Vehicles, Volume 5, Number 4, pages 287 – 297. (draft – pdf) (Bibtex)

Neuromorphic processing

Many of the recent successes in Artificial Intelligence have been achieved thanks to the use of deep neural networks. Especially in the area of visual perception, deep neural networks clearly outperform other more traditional methods. However, current embedded deep neural network processors (Graphics Processing Units, GPUs) are both heavy and power-hungry, precluding their use on small autonomous robots like tiny drones.

Luckily, help is on the way: the burgeoning technology of neuromorphic processing mimics the asynchronous and sparse nature of processing in animal brains, with the aim of providing lightweight, fast, and extremely energy-efficient neural network processing. Neuromorphic processors allow Spiking Neural Networks (SNNs) to be implemented in hardware. These networks have temporal dynamics that are more similar to those of biological neurons. The neurons have a membrane voltage that varies over time, and they send information to other neurons by means of spikes when the voltage goes over a threshold.
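The membrane voltage and threshold mechanism can be sketched with a leaky integrate-and-fire neuron, a common textbook spiking neuron model. The code below is a minimal illustration in plain Python rather than the model of any particular neuromorphic processor; the decay factor, threshold, reset to zero, and input values are assumptions chosen for the example.

```python
def simulate_lif(inputs, decay=0.9, threshold=1.0):
    """Simulate one leaky integrate-and-fire neuron; returns a list of 0/1 spikes.

    At every time step the membrane voltage leaks (is multiplied by `decay`),
    integrates the incoming current, and emits a spike when it reaches
    `threshold`, after which it is reset to zero.
    """
    voltage = 0.0
    spikes = []
    for current in inputs:
        voltage = decay * voltage + current
        if voltage >= threshold:
            spikes.append(1)
            voltage = 0.0  # reset after spiking
        else:
            spikes.append(0)
    return spikes


# A constant input of 0.3 per step charges the neuron until it fires, then repeats.
spike_train = simulate_lif([0.3] * 12)
# Without input the voltage never reaches the threshold, so nothing is sent.
silent_train = simulate_lif([0.0] * 12)
```

The output is sparse: most time steps carry no spike, and a neuron without input produces no activity at all, which is where the energy efficiency of spiking hardware is expected to come from.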

Neuromorphic processing will reach its full potential when combined with neuromorphic sensing. Notably, neuromorphic cameras are a great match. These cameras do not capture frames at a fixed time interval, but have pixels that independently send information whenever they experience a brightness change. The ON (brighter) and OFF (darker) events coming from these cameras arrive asynchronously, with latencies on the order of microseconds. These events can be fed directly to a spiking neural network to perform complex visual tasks both fast and very efficiently.
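To give a feeling for the ON and OFF events, the sketch below emulates them by comparing two intensity frames. This is only an approximation for illustration: a real neuromorphic camera has no frames and each pixel fires on its own clock, and the function name, the threshold value, and the use of log intensity as the compared quantity are assumptions of this example.

```python
import math


def frames_to_events(prev_frame, next_frame, threshold=0.2):
    """Emulate event-camera output from two frames of positive intensities.

    Returns a list of (x, y, polarity) tuples, with polarity +1 for an ON event
    (pixel got brighter) and -1 for an OFF event (pixel got darker). Pixels whose
    log-intensity change stays below `threshold` produce no event.
    """
    events = []
    for y, (prev_row, next_row) in enumerate(zip(prev_frame, next_frame)):
        for x, (before, after) in enumerate(zip(prev_row, next_row)):
            change = math.log(after) - math.log(before)
            if change >= threshold:
                events.append((x, y, +1))
            elif change <= -threshold:
                events.append((x, y, -1))
    return events


prev_frame = [[1.0, 1.0],
              [1.0, 1.0]]
next_frame = [[2.0, 1.0],   # top-left pixel gets brighter
              [1.0, 0.5]]   # bottom-right pixel gets darker
events = frames_to_events(prev_frame, next_frame)
```

Only the two pixels that changed generate output, so a static scene produces no data at all.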

The challenges in the area of neuromorphic sensing and processing are still daunting: Due to the richer temporal dynamics and binary activations, SNNs are currently still harder to train than conventional neural networks. Moreover, the neuromorphic sensing and processing hardware is still much less mature than conventional cameras and GPUs, which means that it is still very difficult to embed them on autonomous robots, especially flying ones.

My main goal in this area is to develop the algorithms that will allow even small drones to tackle complex tasks with neuromorphic sensing and processing. Below are some selected publications, which include the first use of a neuromorphic camera for controlling a flying drone (Hordijk et al. 2018) and the first use of a neuromorphic processor in the control loop of a flying drone (Dupeyroux et al. 2021). There are also two studies on spiking neural network processing of camera events for determining optical flow and ego-motion.

Selected publications:

(2021), Hagenaars, J., Paredes-Vallés, F., and de Croon, G.C.H.E. Self-supervised learning of event-based optical flow with spiking neural networks. Advances in Neural Information Processing Systems (NeurIPS), 34, 7167-7179.

(2021), Dupeyroux, J., Hagenaars, J. J., Paredes-Vallés, F., and de Croon, G.C.H.E. Neuromorphic control for optic-flow-based landing of MAVs using the Loihi processor. In 2021 IEEE International Conference on Robotics and Automation (ICRA) (pp. 96-102). IEEE.

(2019), Paredes-Vallés, F., Scheper, K.Y.W., and de Croon, G.C.H.E. Unsupervised learning of a hierarchical spiking neural network for optical flow estimation: From events to global motion perception. IEEE transactions on pattern analysis and machine intelligence, 42(8), 2051-2064.

(2018), Pijnacker Hordijk, B. J., Scheper, K.Y.W., and de Croon, G.C.H.E. Vertical landing for micro air vehicles using event‐based optical flow. Journal of Field Robotics, 35(1), 69-90.

Self-supervised learning

Learning is very important for animals, and it is commonly accepted that it will be very important to future robots as well. However, most current autonomous robots are completely pre-programmed. There are multiple reasons for this. For example, reinforcement learning methods work by trial-and-error, using the reward signals from the different trials as learning signals. The problem is that typically many trials are necessary for learning (on the order of thousands), something which is undesirable on most real robots. Robots such as the DelFly would certainly not be able to learn in this way. A different learning method is imitation learning, in which a robot typically learns from how a human performs a task. This in turn requires quite some effort on the part of the human supervisor. Wouldn’t it be great if a robot could learn completely by itself?

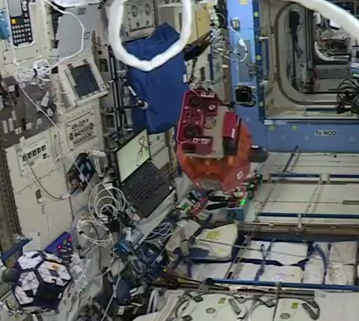

In self-supervised learning (SSL), a robot teaches itself how to do something it already knows. This sounds strange of course, because why would a robot want to learn something it already knows? An example may help here. The picture shows the SPHERES VERTIGO robot, which is equipped with a stereo system (two cameras), with which it can see distances. The robot uses the stereo vision distances to teach itself to also see distances with a single, still image. After learning, it can then also navigate around the International Space Station with one camera – just in case things go really wrong. We performed the experiment with the SPHERES robot together with ESA, MIT, and NASA on the International Space Station in 2015, making it the first ever learning robot in space!
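The idea of one sense teaching another can be sketched in a few lines. In this toy example the stereo distances act as training labels for a monocular cue. The cue used here, the apparent size of an object in pixels, is a hypothetical stand-in and not the appearance features of the actual experiments, and the numbers and function names are made up for the illustration.

```python
def fit_scale(inverse_sizes, stereo_distances):
    """Least-squares k for the model distance ≈ k * (1 / apparent_size)."""
    numerator = sum(x * d for x, d in zip(inverse_sizes, stereo_distances))
    denominator = sum(x * x for x in inverse_sizes)
    return numerator / denominator


def predict_distance(k, apparent_size_px):
    """Monocular distance estimate that no longer needs the stereo system."""
    return k / apparent_size_px


# Training data the robot collects by itself while stereo vision still works (assumed values).
apparent_sizes_px = [10.0, 20.0, 25.0, 50.0]   # monocular cue from a single image
stereo_distances_m = [5.0, 2.5, 2.0, 1.0]      # supervision from the stereo pair

k = fit_scale([1.0 / s for s in apparent_sizes_px], stereo_distances_m)

# Later, with only one camera available:
mono_estimate = predict_distance(k, 40.0)
```

No human labels are involved: every training target comes from the robot's own trusted sensor, which is why the data are plentiful and the learning is supervised.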

Over the years, I have studied different case studies, showing how SSL can extend a robot’s capabilities without risky trial-and-error learning. Learning is typically very fast, as it is supervised and as it can build on ample amounts of data coming from the robot’s sensors. This is especially important for data-hungry methods such as deep neural networks.

Selected publications:

(submitted) Paredes-Vallés, F., Scheper, K.Y.W., De Wagter, C., and de Croon, G.C.H.E. Taming Contrast Maximization for Learning Sequential, Low-latency, Event-based Optical Flow. arXiv preprint arXiv:2303.05214.

(2018), Martins, D., van Hecke, K., and de Croon, G.C.H.E., Fusion of stereo and still monocular depth estimates in a self-supervised learning context, In 2018 IEEE International Conference on Robotics and Automation (ICRA) (pp. 1-8). IEEE. (draft – pdf)

(2016), van Hecke, K., de Croon, G.C.H.E., Hennes, D., Setterfield, T. P., Saenz-Otero, A., & Izzo, D. Self-supervised learning as an enabling technology for future space exploration robots: ISS experiments. At the International Astronautical Congress (IAC 2016).

(2016), Lamers, K., Tijmons, S., De Wagter, C., and de Croon, G.C.H.E., Self-Supervised Monocular Distance Learning on a Lightweight Micro Air Vehicle, at IROS 2016.

(2015), H.W. Ho, C. De Wagter, B.D.W. Remes, and G.C.H.E. de Croon, “Optical flow for self-supervised learning of obstacle appearance”, in the 2015 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2015)

Evolutionary Robotics

Small animals like insects perform complex tasks by linking together simple behaviors in a smart manner. For instance, male moths are able to find female moths by means of their odor. In turbulent air, this odor source finding problem is extremely challenging. The male moth successfully finds the female by (roughly) employing the following straightforward strategy. If it does not smell the female’s scent, it performs a “casting” maneuver, which means flying to and fro, perpendicular to the wind. If it does capture the scent, the moth flies upwind. It does so until it loses the scent, after which it starts casting again.
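The strategy above amounts to a two-state behavior that can be written down directly. The sketch below is a rough caricature for illustration: the action names are hypothetical, the scent reading is reduced to a boolean, and the casting direction simply alternates at every step without scent.

```python
def moth_policy(scent_readings):
    """Map a sequence of boolean scent detections to cast-and-surge actions."""
    actions = []
    cast_direction = "left"
    for smells_scent in scent_readings:
        if smells_scent:
            # Scent detected: fly against the wind towards the source.
            actions.append("surge upwind")
        else:
            # Scent lost: sweep perpendicular to the wind, alternating sides.
            actions.append(f"cast {cast_direction}")
            cast_direction = "right" if cast_direction == "left" else "left"
    return actions


actions = moth_policy([False, False, True, True, False])
```

The policy needs no map and no memory beyond the current casting direction, which illustrates how little computation such a behavior requires.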

The smart behavioral routines exhibited by animals may not be suitable for a robot, which has different sensing and actuation capabilities. Still, I would like robots to follow similarly smart, efficient behavioral strategies. To this end, I study the use of evolutionary robotics, in which robot controllers are not designed by the roboticist, but designed by an artificial evolutionary process. Some of my work is tuned to specific applications, e.g., how can a robot find an odor source? I also focus on improving the methodology of evolutionary robotics, and then especially on ways in which evolved controllers can be successfully employed by real robots (crossing the gap between simulation and the real world) and increasing the complexity of tasks that can be tackled with evolutionary robotics.
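The core loop of an artificial evolutionary process can be sketched as follows. This is a generic, minimal evolution strategy and not the setup used in the publications below: the "controller" is a single gain, the fitness function is a made-up stand-in for what would normally be a score from simulated or real robot trials, and all parameter values are assumptions.

```python
import random


def fitness(gain: float) -> float:
    """Toy fitness (assumed): best when the controller gain equals 0.7."""
    return -(gain - 0.7) ** 2


def evolve(generations=50, offspring=10, sigma=0.1, seed=0):
    """Evolve a single controller gain by mutation and selection.

    Each generation, the current best gain is mutated with Gaussian noise to
    create `offspring` candidates. The best candidate replaces the parent only
    if it scores higher, so the best fitness never decreases.
    """
    rng = random.Random(seed)
    best = 0.0
    history = [fitness(best)]
    for _ in range(generations):
        candidates = [best + rng.gauss(0.0, sigma) for _ in range(offspring)]
        challenger = max(candidates, key=fitness)
        if fitness(challenger) > fitness(best):
            best = challenger
        history.append(fitness(best))
    return best, history


best_gain, fitness_history = evolve()
```

In evolutionary robotics the expensive part is the fitness evaluation, which is usually done in simulation. That is why the gap between simulation and the real world matters so much for whether an evolved controller works on a real robot.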

Selected publications:

(2020), Hagenaars, J.J., Paredes-Vallés, F., Bohté, S.M., de Croon, G.C.H.E., Evolved Neuromorphic Control for High Speed Divergence-based Landings of MAVs, in IROS 2020. (pdf arxiv version)

(2020), Scheper, K.Y.W. and de Croon, G.C.H.E., Evolution of robust high speed optical-flow-based landing for autonomous MAVs, Robotics and Autonomous Systems 124, 103380. (pdf arxiv version)

(2017), Scheper, K.Y.W., and de Croon, G.C.H.E. Abstraction, Sensory-Motor Coordination, and the Reality Gap in Evolutionary Robotics, in Artificial Life, volume 23, number 2, p. 124-141. (pdf – draft)

(2016), Scheper, K.Y.W., Tijmons, S., de Visser, C.C., and de Croon, G.C.H.E., “Behaviour Trees for Evolutionary Robotics”, Artificial Life. (draft) Winter 2016, Vol. 22, No. 1, Pages 23-48.

(2013), de Croon, G.C.H.E., O’Connor, L.M., Nicol, C., Izzo, D., “Evolutionary robotics approach to odor source localization”, in Neurocomputing, Volume 121, 9 December 2013, Pages 481–497 (draft – pdf) (Bibtex)